I'll make this a short one.

I was just having a conversation with a friend, a Rubyist whose opinion I respect, who clued me in that he really hates it when JRuby users use Java libraries with little or no Ruby syntactic sugar. He hates that every day there's a better chance Java-related technologies will enter his world, and that he's going to have to fix someone's Java-like Ruby. He lamented the lack of decent wrapper libraries that hide "the Java insanity," and the abundance of libraries that are just bare-metal shims over the Java classes they call. He expressed his frustration that JRuby's success will mean he has to deal with Java. He doesn't want to *ever* have to do that.

And he said it's our fault.

I've heard variations of this from other key Rubyists too. There's a lot of hate and angst in the Ruby community. Many of them are Java escapees, who long ago decided they couldn't tolerate Java as a language or were fed up with some of the many failed libraries and development patterns it has spawned. Some of them are C escapees who've never quite been able to let go of C, be it for performance reasons or because of specific libraries they need. Some of them have been Rubyists longer than anything else (or maybe just longer than anyone else), and see themselves as the purists, the elite, the Ivory Tower, keepers of all that's good in the Ruby world and judge, jury, and executioner for all that's bad. In the end, however, there's one thing these folks have in common.

They think JRuby is a terrible idea.

Of course it's not everyone. I think the general Ruby populace still looks at JRuby as an interesting project...for Java developers. Or maybe just as a gateway to bring people into the community. A growing minority of folks, however, have managed to move beyond prejudices against Java to make new tools, applications, and libraries using JRuby that might not otherwise have been possible. And some folks are simply ecstatic about JRuby's potential.

Why is JRuby such a polarizing issue?

I don't see this in the Python community, for example, which might surprise some Rubyists. Pythonistas seem to have positively embraced both IronPython and Jython. There's no side-chatter at the conferences about the evils of anything with a J in it. There are no mocking slides, no jokes at Jython or IronPython developers' expense. No "Python elite" cliques actively working to shut Jython or IronPython out, or to discourage others from considering them. The community as a whole, Guido included, seems to be genuinely thankful for implementation diversity. Even if one of them does have a J in it.

What's different about these two communities? Why?

I work on JRuby. For the past 3-4 years, it has been my passion. There's been pain and there's been triumph: compatibility hassles; performance numbers steadily increasing; rewriting subsystems I swore I'd never touch, like IO and Java integration. Over the past two years, I've put in four years' worth of work, writing compilers, rewriting JRuby's runtime, rewriting whole subsystems, speaking at conferences, staying up late nights (frequently ALL night) helping users on the JRuby IRC channel or mailing lists, and hacking, hacking, hacking almost all day, every day. For what? Because I want to infect the Ruby community with a new and more virulent strain of Java? Because I don't know any better?

I work on JRuby because I love Ruby and I honestly believe JRuby is one of the best things ever to happen to Ruby. JRuby takes a decade of Java dogma and turns it on its head. JRuby isn't about Java, it's about taking the best of the Java platform and using it to improve Ruby. It's about me and others working relentlessly, writing Java so you don't have to. It's about giving Ruby access to one of the best VMs around, to one of the largest collections of libraries in the world, to a pool of talented engineers who've written this stuff a dozen times over. Sure there's crap in the Java world. Sure the Java elite took power in the late 90s and started to jam a bunch of nonsense down our throats. Sure the language has aged a bit. That's all peripheral. JRuby makes it possible to filter out and take advantage of the good parts of the Java world without writing a single line of Java.

Tell me that's not a good idea.

I sympathize with my friend...I really do. I've not only seen a lot of really bad Ruby code come out of JRubyists, I've created some of it. Writing good code is hard in any language, but writing Ruby code that meets the Ivory Tower's standards is like trying to decipher J2EE specifications. If I have to listen to some speaker meditate on what "beautiful code" means one more time I think I'm going to kill someone. Yes, beauty is important. I have my idea of beautiful code and you have yours, and there may be a nexus where the two meet. But tearing into people who are trying to learn Ruby, trying to move away from Java, doing the best they can to meet the Ivory Tower's standards of "beauty"...well that's just mean. And it doesn't have to be that way. "Beauty" doesn't have to be Ruby's "Enterprise".

JRuby doesn't mean Java any more than MRI means C, IronRuby means C#, or Rubinius means C++ and LLVM. JRuby, like the other implementations, is a tool, an enabler, an alternative. JRuby does many things extremely well and others poorly, just like the other implementations. It's bringing new people into Ruby, and for that we should be thankful. It's pushing the boundaries of what you can do with Ruby, and for that we should be thankful. It's not about Java...it's about learning from the successes and mistakes of the past and using that knowledge to push Ruby forward.

So what do we do about JRuby users that start writing Java code in Ruby? We teach them. We help them. We don't slap a scarlet J on their chest and run them out of town. What do we do about shim layers over Java libraries? We build a layer on top of that shim that better exercises Ruby's potential, or we help build a new wrapper to replace the old. That's what Nick Sieger did with Warbler. That's what the Happy Campers are doing with Monkeybars and Jeremy Ashkenas did with Ruby-Processing. More and more people are recognizing that JRuby isn't a threat, doesn't represent the old world, doesn't mean Java...it means empowerment, it means standing on the shoulders of giants, and never having to leave Ruby.

I guess what it really comes down to is this:

The next time someone tries to cut down JRuby, tries to convince you it's a bad idea, to avoid it, to stay away from the evils of Java; the next time someone tears into a library author who hasn't learned the best way to utilize Ruby; the next time someone complains about a library that doesn't lend itself to reimplementation on the C-based implementations, doesn't hide the fact that it's wrapping Java code; the next time someone tries to convince you that JRuby is going to hurt the Ruby community...you tell them to remember this:

JRuby is not going away. More people try JRuby every day. As long as Rubyists who know "the way", who have learned how to create beautiful APIs and DSLs, who serve as the stars, the leaders of the Ruby community, setting standards for others to follow...as long as those people try to marginalize JRuby, treat it like a pariah, or convince others to do the same...

...it will only get worse.

Friday, September 05, 2008

The Elephant

Tuesday, September 02, 2008

A Few Thoughts on Chrome

I'm just reading through the comic and thought I'd jot down a few things I notice as I go. Take them for what they're worth: high-level opinions.

- Browsers single-threaded? Maybe 10 years ago. I routinely have a CPU-heavy JS running in Firefox and it doesn't stop me working in other tabs.

- Threading is hard. Let's go shopping. Or use processes. So now when I have 50 tabs open, it won't just be 50 idle tabs, it will be 50 idle processes all holding on to their resources, not sharing, not pooling. Hmm.

- The majority of sites I view don't have copious amounts of JS running constantly. In response to events, sure. On load, sure. But not sitting there churning away. So a process per tab for pages that are rendered once and executed once seems a little wasteful.

- Browsers eat more memory because of memory fragmentation, eh? Memory management is hard. Let's go shopping and use processes. Or use a compacting memory manager, eh? What year is this?

- Even without GC it's not difficult to use opaque handles instead of pointers so you can juggle memory around as needed. Perhaps this is the over-optimization of the C/C++ crowd still living large. "I can't afford to dereference ONE MORE POINTER to get at my data! I've got to be direct-fucking-to-the-metal!"

- Not particularly looking forward to seeing 50 Chrome processes in my process lists. The ability to see individual pages as processes and kill them separately does sound intriguing; not sure it's worth every damn tab being a process though.

- Fuzz testing for browsers is a great idea. Zed Shaw and I talked about doing something similar for Ruby implementations...a sort of "smart fuzzing" that sends them parsable but random input. I don't know why more testing setups don't fuzz.

- It will be interesting to see V8 versus TraceMonkey. Hopefully my minders will see there's some value in revisiting Rhino and bringing it up to date (it hasn't had any major work done in a long time, and could be a *lot* faster).

- Pattern-based code-generation behind the scenes is also good. Kresten Krab Thorup demonstrated something similar with Ruby on JVM where instance variables (and I think, method dispatch tables) could be promoted to temporary classes with real fields at runtime, making them a lot faster. I've prototyped something similar in JRuby, but until Java 7 it's expensive to generate and load lots of throwaway bytecode.

- Ahh, now they start talking about using better garbage collection technology. Perhaps the folks who decided processes were the only way to efficiently manage cross-tab memory shoulda had a V8?

- Dragging tabs between windows sounds pretty cool. Except that I actually find multiple browser windows to be a nuisance most of the time (and no, not because I can't drag tabs...because I generally browse everything full-screen and would have to search for the right window). Besides, I can drag tabs in Firefox already. I suppose the benefit in Chrome is they wouldn't have to reload. Do I care?

- I just dragged the Chrome comic to another window to test it and then spent 30 seconds trying to figure out where I dragged the Chrome comic. Multiple browser windows = fail.

- I'm glad browser address completion has been made "really compelling" finally.

- Finally someone makes local browser caching smart! I'd say probably 50% of the pages I visit never ever change, like online documentation. I never want to have to go to the net to view them if I don't have to, and if I'm not connected...dammit, just give me what's in the cache!

- On the other hand, there are privacy concerns too. Hopefully I'll have full control over what is and is not cached, and an easy way to flush it. I can imagine someone borrowing my browser for a moment and stumbling onto a non-public page I've cached.

- Opening an MRU page in a new tab is fine, but I'm almost always using the keyboard to open a tab. Hopefully this isn't going to get in the way of my immediately typing a place to go, which is usually why I open a tab.

- Ahh, there we go, an "incognito tab" for private browsing. Seems like a good way to go.

- I'm not sure the keyboard angle is well understood here. Hopefully I'm wrong. As much as possible, I avoid the mouse, even when browsing. Attention to the keyboard crowd would go a long way, especially in geekier communities. Example: keyboard support in most Google apps is bafflingly arbitrary. Maybe that's a limitation of JS or the "old world" browsers. Maybe not. Still baffling.

- I hope there's going to be support for Java in Chrome. Seems like it would be a big win, since it can already share some data across processes (like the class libraries), misbehaving applets wouldn't impact the rest of the process (which is admittedly a good case for process-based isolation...maybe use the presence of heavy JS, plugins, applets as the indication to process-isolate?), and I'd wager Google could do a cleaner job of integrating it (without it being intrusive) than other browsers have so far. Plus since plugins are already being offloaded to a separate process, that could be a single JVM kept warm (or separate JVMs, for more memory use but better isolation and sandboxing). Java ought to be one of the best-behaved plugin citizens...done right.

- I wonder how long it will be until Chrome gets Google API updates (like Gears) before the other browsers. I don't buy the "we just want to make the user experience all unicorns and lollipops" thing. There's a business motivation for Chrome. More ad exposure? A first-class deployment for Google APIs so more people write for them so more people use Chrome so ads are easier to channel?

- Ahh yes...we want them to be open standards. Maybe they will, maybe they won't. If they don't...here's where it gets squirrely...it's open source! Anyone can take what they want and put it in their own browser, right? Yeah, you and the other 100 plugins that only work in one type of browser. How quickly do they get gobbled up by others? You do realize it takes some dedicated resources to "take what you want" and maintain it, not to mention keeping it up to date with the original. A commitment to always supporting all browsers seems to be much harder once you've made a monetary commitment to building your own browser. Left hand, meet right hand.

- I won't ask the "why didn't you just help Firefox" question, since it's obvious and there's a million reasons why someone starts a new project. But I will ask why this project to "help all browsers become more powerful" is sprung on the world a day before the beta. There's a desire for exclusivity here or I'll eat my hat. Unicorns and lollipops would have required opening the project months ago, so "all browsers" could benefit from it *as it progressed* and contribute *as it progressed*. Open is not "open once we're ready to beta our product because we think it's nearing completion", it's "we're working on it now and want everyone to benefit from it as we move forward."
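The "smart fuzzing" idea from the list above (random but parseable input) can be sketched in a few lines. This is a minimal sketch assuming a toy grammar of integer arithmetic rather than full Ruby; ExprFuzzer is a hypothetical name, not part of any existing tool. Because every generated string is valid Ruby, a crash or wrong answer points at the implementation rather than the parser.

```ruby
# Hypothetical sketch of grammar-based ("smart") fuzzing over a toy grammar:
#   expr := integer | "(" expr op expr ")" | "-(" expr ")"
# Every generated string is valid Ruby, so failures aren't parse errors.
class ExprFuzzer
  OPS = %w[+ - *].freeze

  def initialize(seed)
    @rng = Random.new(seed) # seeded so any failing input can be replayed
  end

  def expr(depth = 4)
    # Bottom out at a literal when out of depth, or 30% of the time anyway.
    return @rng.rand(-100..100).to_s if depth.zero? || @rng.rand < 0.3

    if @rng.rand(3) < 2
      op = OPS.sample(random: @rng)
      "(#{expr(depth - 1)} #{op} #{expr(depth - 1)})"
    else
      "-(#{expr(depth - 1)})"
    end
  end
end
```

Feeding the same seeded inputs to two implementations (MRI and JRuby, say) and comparing the results would turn this into a simple differential test.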

And I reserve the right to completely flip any of these opinions after the beta is released this evening...though I probably won't, since I can't run it (Windows only).

Update: I borrowed a friend's Windows machine to give Chrome a 15-minute try. Here are my additional 15-minute thoughts, so take them at face value.

- I hate installers that download additional stuff. When my friend and I first downloaded it, we walked away from the interwebs for some offline fiddling. Only then did we discover we didn't have the whole thing.

- Love the interface. It's almost too clean. Unfortunately I can guarantee I'd immediately clutter it up with bookmarks I need (want) one-click access to. Such is life. But I like starting from a blank slate first, rather than starting from a cluttered mess.

- Very fast, true to form. It also feels snappier than Firefox, but Firefox isn't known for its blazing speed. Maybe feels faster than Safari. Of course, young products are always fast.

- Not quite a process per tab. It seems like tabs manually opened and presumably tabs opened from bookmarks do get their own processes. Tabs opened via right-clicking on the link and choosing to open in a new tab stay in-process. That's a reasonable way to reduce process load, since a good portion of the tabs I open are from existing pages like Reader or News. Unfortunately, this also means that a good portion of the tabs I open are not subject to the sandboxing or isolation touted as a key feature of Chrome.

- The developer pages list a Mozilla Java plugin wrapper among the included technologies. Yay! I did not get a chance to try it out (Windows rapidly started to piss me off again).

- I picked a tab at random to forcibly kill and the entire browser disappeared. I guess I picked the right one.

- This is a little worrisome:

All told it's about what I expected. Very clean, very polished, very young. I'm sure a lot of these issues will get shaken out during the beta. I do hope there's a way to turn off process-per-tab, or I can't see myself ever wanting to run Chrome. I can see myself gathering several dozen Chrome processes in the course of a week. Process isolation for other aspects (like JS or plugins), no worries. I'm looking forward to an OS X version, and from looking at the Chrome developer pages it sounds like that isn't too far off. Perhaps marketroids pressured the team to get out a Windows version first, so they could make some headlines. Damn marketroids.

And as regular readers of my blog will tell you, I can be a bit salty about young up-and-coming technologies with a chip on their shoulders. Ignore that.

Sunday, August 31, 2008

A Duby Update

I haven't forgotten about my promise to post on FFI and MVM APIs, but I've been taking occasional breaks from JRuby (heaven forbid!) to get some time in on Duby.

What Is Duby?

Duby, for those who have not heard of it, is my little toy language. It's basically a Ruby-like statically typed language with local type inference and not a whole lot of bells and whistles. The goal for Duby (which is most definitely a working name...it will probably change) is to provide all the best parts of Ruby syntax people are familiar with, but add to it:

- Written all in Ruby (and obviously the eventual plan would be to port it to Duby)

- Backend-agnostic (JVM is obviously my focus, but nothing stops someone from building an LLVM or CLR typer+compiler)

- Minimally-intrusive static typing (Duby infers types from arguments and calls, like Scala)

- Features missing from Java (Duby treats module inclusion and class reopening like defining extension methods in C#)

- A very pluggable type inference engine (Duby's "Java" typer is currently all of about 20 lines of code that plugs into the engine)

- A pluggable compiler (Duby will allow adding compiler plugins to turn str1 + str2 into concatenation or StringBuffer calls, for example)

- Absolutely no runtime dependencies (I want compiled output from Duby to be *done*, so there's no runtime library to lug along so it works; once compiled, there are no dependencies on Duby)
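To illustrate the pluggable-compiler bullet, here is a hedged sketch of what a compiler plugin chain might look like. The node shape, plugin classes, and emitted pseudo-instructions are all assumptions for illustration (StringBuilder stands in for the StringBuffer mentioned above); this is not Duby's actual compiler API.

```ruby
# Hypothetical sketch of a pluggable compiler back end. Each plugin may claim
# a typed call node and return "code" for it; the first plugin to answer wins.
# Output is a list of pseudo-instructions, not real JVM bytecode.
TypedCall = Struct.new(:name, :receiver_type, :arg_types, :operands)

class StringConcatPlugin
  # Claims only string + string and emits a StringBuilder-style sequence.
  def compile(node)
    return nil unless node.name == "+" && node.receiver_type == :string &&
                      node.arg_types == [:string]

    ["new StringBuilder", *node.operands.map { |o| "append #{o}" }, "toString"]
  end
end

class PlainCallPlugin
  # Fallback that claims everything: an ordinary method invocation.
  def compile(node)
    receiver, *args = node.operands
    ["invoke #{node.name} on #{receiver}(#{args.join(', ')})"]
  end
end

class PluggableCompiler
  def initialize(plugins)
    @plugins = plugins
  end

  def compile(node)
    @plugins.each do |plugin|
      code = plugin.compile(node)
      return code if code
    end
    raise ArgumentError, "no plugin can compile #{node.name}"
  end
end
```

Plugin order carries the design here: specialized plugins go first and a catch-all goes last, so adding an optimization never requires touching the default path.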

Now don't get me wrong, Java is a great language, but it's become a victim of its own success. While other languages have been adding niceties like local type inference, structural typing, closures and extension methods, Java's stayed pretty much the same. There have been no major language changes to Java since Java 5's additions of generics, enums, annotations, varargs, and a few other miscellaneous odds and ends. Meanwhile, I live in a torturous world between Ruby and Java, where I'd love to write everything in Ruby (too slow, too inexact for "stable layer" code), but must instead write everything in Java (with associated syntactic baggage and 20th-century language design). And so necessity dictates taking a new approach.

def fib(a => :fixnum)
  if a < 2
    a
  else
    fib(a - 1) + fib(a - 2)
  end
end

puts fib(45)

So here we see an example I've shown in previous posts, but with a twist. First off, it's almost exactly the same as the equivalent Ruby code except for the argument type declaration => :fixnum. The rest of the script is all vanilla Ruby, even down to the puts call at the bottom.

But all is not as it seems. This is not Ruby code.

The type declaration in the method def looks natural, but it's not actually parseable Ruby. I had Tom Enebo hack a change into JRuby's parser (off by default) to allow that syntax. Duby originally had a syntax something like this, so it could be parsed by any Ruby impl:

def fib(a)
  {a => :fixnum}
  ...
end

But it's obviously a lot uglier.

New Type Inference Engine

Ignoring Java for a moment, we can focus on the type inference happening here. Originally Duby only worked with explicit Java types, which obviously meant it would only ever be useful as a JVM language. The use of those types was also rather ugly, especially in cases where you just want something "Fixnum-like". So even though I had a working Duby compiler several months ago, I took a step back to rewrite it. The rewrite involved two major changes:

- Rather than build Duby directly on top of JRuby's AST I introduced a transformation phase, where the Ruby AST goes in and a Duby AST comes out. This allowed me to build up a structure that more accurately represented Duby, and also has the added bonus that transformers could be built from any Ruby parse output (like that of ruby_parser).

- Instead of being inextricably tied to the JVM's types and type system, I rewrote the inference engine to be type-system independent. Basically it uses all symbolic and string-based type identifiers, and allows wiring in any number of typing plugins, passing unresolved nodes to them in turn. Two great example plugins exist now: a Math plugin that knows how to handle mathematical and boolean operators against numeric types like :fixnum (it knows :fixnum < :fixnum produces a :boolean, for example), and a Java plugin that knows how to reach out into Java's classes and methods to infer return types for calls out of Duby-space.

* [Simple] Learned local type under MethodDefinition(fib) : a = Type(fixnum)
* [Simple] Retrieved local type in MethodDefinition(fib) : a = Type(fixnum)
* [AST] [Fixnum] resolved!
* [Simple] Method type for "<" Type(fixnum) on Type(fixnum) not found.
* [Simple] Invoking plugin: #<Duby::Typer::MathTyper:0xcc5002>
* [Math] Method type for "<" Type(fixnum) on Type(fixnum) = Type(boolean)
* [AST] [Call] resolved!
* [AST] [Condition] resolved!
* [Simple] Retrieved local type in MethodDefinition(fib) : a = Type(fixnum)
* [Simple] Retrieved local type in MethodDefinition(fib) : a = Type(fixnum)
* [AST] [Fixnum] resolved!
* [Simple] Method type for "-" Type(fixnum) on Type(fixnum) not found.
* [Simple] Invoking plugin: #<Duby::Typer::MathTyper:0xcc5002>
* [Math] Method type for "-" Type(fixnum) on Type(fixnum) = Type(fixnum)
* [AST] [Call] resolved!
* [Simple] Method type for "fib" Type(fixnum) on Type(script) not found.
* [Simple] Invoking plugin: #<Duby::Typer::MathTyper:0xcc5002>
* [Math] Method type for "fib" Type(fixnum) on Type(script) not found
* [Simple] Invoking plugin: #<Duby::Typer::JavaTyper:0x1635aad>
* [Java] Failed to infer Java types for method "fib" Type(fixnum) on Type(script)
* [Simple] Deferring inference for FunctionalCall(fib)
* [Simple] Retrieved local type in MethodDefinition(fib) : a = Type(fixnum)
* [AST] [Fixnum] resolved!
* [Simple] Method type for "-" Type(fixnum) on Type(fixnum) not found.
* [Simple] Invoking plugin: #<Duby::Typer::MathTyper:0xcc5002>
* [Math] Method type for "-" Type(fixnum) on Type(fixnum) = Type(fixnum)
* [AST] [Call] resolved!
* [Simple] Method type for "fib" Type(fixnum) on Type(script) not found.
* [Simple] Invoking plugin: #<Duby::Typer::MathTyper:0xcc5002>
* [Math] Method type for "fib" Type(fixnum) on Type(script) not found
* [Simple] Invoking plugin: #<Duby::Typer::JavaTyper:0x1635aad>
* [Java] Failed to infer Java types for method "fib" Type(fixnum) on Type(script)
* [Simple] Deferring inference for FunctionalCall(fib)
* [Simple] Method type for "+" on not found.
* [Simple] Invoking plugin: #<Duby::Typer::MathTyper:0xcc5002>
* [Math] Method type for "+" on not found
* [Simple] Invoking plugin: #<Duby::Typer::JavaTyper:0x1635aad>
* [Java] Failed to infer Java types for method "+" on
* [Simple] Deferring inference for Call(+)
* [Simple] Deferring inference for If
* [Simple] Learned method fib (Type(fixnum)) on Type(script) = Type(fixnum)
* [AST] [Fixnum] resolved!
* [Simple] Method type for "fib" Type(fixnum) on Type(script) = Type(fixnum)
* [AST] [FunctionalCall] resolved!
* [AST] [PrintLine] resolved!
* [Simple] Entering type inference cycle
* [Simple] Method type for "fib" Type(fixnum) on Type(script) = Type(fixnum)
* [AST] [FunctionalCall] resolved!
* [Simple] [Cycle 0]: Inferred type for FunctionalCall(fib): Type(fixnum)
* [Simple] Method type for "fib" Type(fixnum) on Type(script) = Type(fixnum)
* [AST] [FunctionalCall] resolved!
* [Simple] [Cycle 0]: Inferred type for FunctionalCall(fib): Type(fixnum)
* [Simple] Method type for "+" Type(fixnum) on Type(fixnum) not found.
* [Simple] Invoking plugin: #<Duby::Typer::MathTyper:0xcc5002>
* [Math] Method type for "+" Type(fixnum) on Type(fixnum) = Type(fixnum)
* [AST] [Call] resolved!
* [Simple] [Cycle 0]: Inferred type for Call(+): Type(fixnum)
* [AST] [If] resolved!
* [Simple] [Cycle 0]: Inferred type for If: Type(fixnum)
* [Simple] Inference cycle 0 resolved all types, exiting

There's a lot going on here. You can see both the MathTyper and the JavaTyper getting involved. Since there are no explicit Java calls, the MathTyper does most of the heavy lifting. The inference stage progresses as follows:

- Make a first pass over all AST nodes, performing trivial inferences (declared arguments, literals, etc).

- Add each unresolvable node encountered to an unresolved list.

- Cycle over that list repeatedly until either all nodes have resolved or the list's contents do not change from one cycle to the next.
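The three steps above amount to a worklist algorithm run to a fixed point. The following Ruby sketch shows its shape under simplifying assumptions: nodes are flat calls over operand nodes, each plugin either answers "what does name(receiver, args) return?" or returns nil, and all class names (Literal, Call, MathPlugin, Engine) are hypothetical rather than Duby's own.

```ruby
# Hypothetical sketch of a pluggable, fixed-point type inference loop.
# Class names are illustrative; this is not Duby's actual code.

# A node whose type is known up front (a literal or a declared argument).
Literal = Struct.new(:type)

# A call whose result type must be inferred from its operands' types.
Call = Struct.new(:name, :receiver, :args) do
  attr_accessor :type
end

# Plugin that knows result types of a few numeric operators.
class MathPlugin
  RULES = {
    ["<", :fixnum, [:fixnum]] => :boolean,
    ["+", :fixnum, [:fixnum]] => :fixnum,
    ["-", :fixnum, [:fixnum]] => :fixnum
  }.freeze

  # Returns a type, or nil if this plugin has no opinion.
  def method_type(name, receiver_type, arg_types)
    RULES[[name, receiver_type, arg_types]]
  end
end

class Engine
  def initialize(*plugins)
    @plugins = plugins
  end

  # Try to type one node; returns true once its type is known.
  def resolve(node)
    return true if node.type
    recv_type = node.receiver.type
    arg_types = node.args.map(&:type)
    return false if recv_type.nil? || arg_types.include?(nil) # operands pending

    @plugins.each do |plugin|
      type = plugin.method_type(node.name, recv_type, arg_types)
      next unless type
      node.type = type
      return true
    end
    false # no plugin could answer; defer
  end

  # The first pass resolves what it can and collects the rest; later cycles
  # retry the unresolved list until it is empty or stops shrinking.
  def infer(nodes)
    unresolved = nodes.reject { |node| resolve(node) }
    until unresolved.empty?
      remaining = unresolved.reject { |node| resolve(node) }
      break if remaining.size == unresolved.size # no progress: give up
      unresolved = remaining
    end
    unresolved # whatever could not be typed
  end
end
```

Listing an outer + call ahead of its two - operands makes it miss the first pass and resolve in the first cycle, much like Call(+) in the log above; a call no plugin can answer (fib on :script with only the math plugin installed) comes back in the unresolved list.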

Include Java

Anyway, back to Duby. Here's a more complicated example that makes calls out to Java classes:

import "System", "java.lang.System"

def foo
  home = System.getProperty "java.home"
  System.setProperty "hello.world", "something"
  hello = System.getProperty "hello.world"
  puts home
  puts hello
end

puts "Hello world!"
foo

Here we see a few new concepts introduced.

First off, there's an import. Unlike in Java however, import knows nothing about Java types; it's simply associating a short name with a long name. The syntax (and even the name "import") is up for debate...I just wired this in quickly so I could call Java code.

Second, we're actually making calls that leave the known Duby universe. System.getProperty and setProperty are calls to the Java type java.lang.System. Now the Java typer gets involved. Here's a snippet of the inference output for this code:

* [Simple] Method type for "getProperty" Type(string) on Type(java.lang.System meta) not found.
* [Simple] Invoking plugin: #<Duby::Typer::MathTyper:0xaf17c7>
* [Math] Method type for "getProperty" Type(string) on Type(java.lang.System meta) not found
* [Simple] Invoking plugin: #<Duby::Typer::JavaTyper:0x1eb717e>
* [Java] Method type for "getProperty" Type(string) on Type(java.lang.System meta) = Type(java.lang.String)
* [AST] [Call] resolved!

The Java typer is fairly simple at the moment. When asked to infer the return type for a call, it takes the following path:

- Attempt to instantiate known Java types for the target and arguments. It makes use of the list of "known types" in the typing engine, augmented by import statements. If those types successfully resolve to Java types...

- It uses Java reflection APIs (through JRuby) to look up a method of that name with those arguments on the target type. From this method, then, we have a return type. The return type is reduced to a symbolic name (since again, the rest of the type inference engine knows nothing of Java types) and we consider it a successful inference. If the method does not exist, we temporarily fail to resolve; it may be that additional methods are defined later that will support this name and argument list.
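Here is a minimal sketch of that lookup path, with one big assumption: since real reflection needs a JVM, a hard-coded table stands in for Java reflection. The import method only records a short-name alias, mirroring the import described earlier, and a nil result means "not resolvable yet," which the engine would treat as a deferral. JavaTyperSketch and its methods are hypothetical names.

```ruby
# Hypothetical sketch of a Java-typer-style plugin. Real Duby asks Java
# reflection (through JRuby); a hard-coded table stands in for that here.
class JavaTyperSketch
  # Stand-in for reflection:
  # [class, static?, method name, parameter classes] => return class
  FAKE_REFLECTION = {
    ["java.lang.System", true, "getProperty", ["java.lang.String"]] => "java.lang.String",
    ["java.lang.System", true, "setProperty",
     ["java.lang.String", "java.lang.String"]] => "java.lang.String"
  }.freeze

  def initialize
    # Known symbolic type names mapped to Java class names.
    @known = { "string" => "java.lang.String" }
  end

  # Like the import above: only associates a short name with a long one.
  def import(short_name, long_name)
    @known[short_name] = long_name
  end

  # Type of a bare constant such as System: the class object ("meta") type.
  def constant_type(short_name)
    long_name = @known[short_name]
    long_name && "#{long_name} meta"
  end

  # Returns a symbolic return type, or nil meaning "can't resolve yet".
  def method_type(name, target_type, arg_types)
    static = target_type.end_with?(" meta")
    target = static ? target_type.delete_suffix(" meta") : target_type
    java_target = @known.fetch(target, target)
    java_args = arg_types.map { |t| @known.fetch(t, t) }
    FAKE_REFLECTION[[java_target, static, name, java_args]]
  end
end
```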

Next Steps

I see getting the JVM backend and typer working as two major milestones. Duby can already learn about Java types anywhere in the system and can compile calls to them. But mostly what works right now is what you see above. There's no support for array types, instantiating objects, or hierarchy-aware type inference. There's no logic in place to define new types or static methods, or to define or access fields. All this will come in time, and probably will move very quickly now that the basic plumbing is installed.

I'm hoping to get a lot done on Duby this month while I take a "pseudo-vacation" from constant JRuby slavery. I also have another exciting project on my plate: wiring JRuby into the now-functional "invokedynamic" support in John Rose's MLVM. So I'll probably split my time between those. But I'm very interested in feedback on Duby. This is real, and I'm going to continue moving it forward. I hope to be able to use this as my primary language some day soon.

Update: A few folks asked me to post performance numbers for that fib script above. So here's the comparison between Java and Duby for fib(45).

Java source:

public class FibJava {
    public static int fib(int a) {
        if (a < 2) {
            return a;
        } else {
            return fib(a - 1) + fib(a - 2);
        }
    }

    public static void main(String[] args) {
        System.out.println(fib(45));
    }
}

Java time:

➔ time java -cp . FibJava
1134903170

real 0m13.368s
user 0m12.684s
sys 0m0.154s

Duby source:

def fib(a => :fixnum)
  if a < 2
    a
  else
    fib(a - 1) + fib(a - 2)
  end
end

puts fib(45)

Duby time:

➔ time java -cp . fib
1134903170

real 0m12.971s
user 0m12.687s
sys 0m0.112s

So the performance is basically identical. But I prefer the Duby version. How about you?

Sunday, August 24, 2008

Zero to Production in 15 Minutes

There still seems to be confusion about the relative simplicity or difficulty of deploying a Rails app using JRuby. Many folks still look around for the old tools and the old ways (Mongrel, generally), assuming that "all that app server stuff" is too complicated. I figured I'd post a quick walkthrough to show how easy it actually is, along with links to everything to get you started.

Here's the full session, in all its glory, for those who just want the commands:

~/work ➔ java -Xmx256M -jar ~/Downloads/glassfish-installer-v2ur2-b04-darwin.jar
~/work ➔ cd glassfish
~/work/glassfish ➔ chmod a+x lib/ant/bin/*
~/work/glassfish ➔ lib/ant/bin/ant -f setup.xml
~/work/glassfish ➔ bin/asadmin start-domain
~/work/glassfish ➔ cd ~/work/testapp
~/work/testapp ➔ jruby -S gem install warbler
~/work/testapp ➔ jruby -S gem install activerecord-jdbcmysql-adapter
~/work/testapp ➔ vi config/database.yml
~/work/testapp ➔ warble
~/work/testapp ➔ ../glassfish/bin/asadmin deploy --contextroot / testapp.war

Now, on to the full walkthrough!

Prerequisites

JRuby 1.1.3 or higher

None of the steps in the main walkthrough require JRuby, since Warbler works fine under other Ruby implementations. But if you want to install and test against the JDBC ActiveRecord adapters, JRuby's the way. And in general, if you're deploying on JRuby, you should probably test and build on JRuby as well. Go to www.jruby.org under "Download!" and grab the latest 1.1.x "bin" distribution. For convenience, I've linked the JRuby 1.1.3 tarball and JRuby 1.1.3 zip here. Download, unpack, put in PATH. That's all there is to it.

Java 5 or higher

Most systems already have a JVM installed. Here are some links to JDK downloads for various platforms, in case you don't already have one.

- Windows, Linux, Solaris: Download the JDK directly from Sun's Java SE Downloads page. I typically download the JDK (Java Development Kit) because I find it convenient to have Java sources, compilers, and debugging tools available, but this walkthrough should work with the JRE (Java Runtime Environment) as well. Linux and Solaris users should also be able to use their packaging system of choice to install a JDK.

- OS X: 32-bit Intel Macs can't run Apple's Java 6, so you'll want to look at the Soylatte Java 6 build for OS X to get the best performance. A small warning...it doesn't have Cocoa-based UI components, so it will use X11 if you start up a GUI app.

- BSDs: FreeBSD users should check the FreeBSD Java downloads page. I believe there's a port for FreeBSD and package/port for OpenBSD but I couldn't dig up the details. We have had users on both platforms, though, so I know they work fine.

- Others: There's basically a JDK for just about every platform, so if you're not on one of these just do a little digging. All you need to know is that it needs to be a full Java 5 or higher implementation.

Hopefully by now most of you are on a 2.x version of Rails. This walkthrough will assume you've got Rails 2.0+ installed. If you're using JRuby, it's a simple "jruby -S gem install rails" or if you've got JRuby's bin in PATH, "gem install rails" should do the trick. Note that Warbler (described later) should work in any Ruby implementation, since it's just a packager and it includes JRuby.

Step 1: The App Server

The words "Application Server" are terrifying to most Rubyists, to the point that they'll refuse to even try this deployment model. Of course, the ones that try it usually agree it's a much cleaner way to deploy apps, and generally they don't want to go back to any of the alternatives.

Much of the teeth-gnashing seems to surround the perceived complexity of setting a server up. That was definitely the case 5 years ago, but today's servers have been vastly simplified. For this walkthrough, I'll use GlassFish, since it's FOSS, fast, and easy to install.

I'm using GlassFish V2 UR2 (that's Version 2, Update Release 2) since it's very stable and by most accounts the best app server available, FOSS or otherwise. Not that I'm biased or anything. At any rate, it's hard to argue with the install process.

1. Download from the GlassFish V2 UR2 download page. The download links start about halfway down the page and range from 53MB (English localization) to 93MB (Multilanguage) in size.

2. Run the GlassFish installer. The .jar file downloaded is an executable jar containing the installer for GlassFish as well as GlassFish itself. The -Xmx specified here increases the memory cap for the JVM from its default 64MB to 256MB, since the archive gets unpacked in memory.

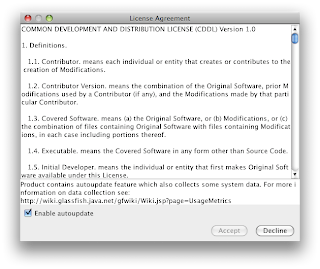

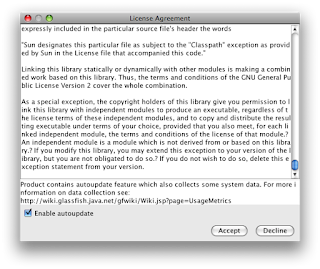

~/work ➔ java -Xmx256M -jar ~/Downloads/glassfish-installer-v2ur2-b04-darwin.jarBefore the unpack begins, the installer will pop up a GUI asking you to accept the GlassFish license.

glassfish

glassfish/docs

glassfish/docs/css

glassfish/docs/figures

...

glassfish/updatecenter/README

glassfish/updatecenter/registry/SYSTEM/local.xml

installation complete

~/work ➔

Read the license or not...it's up to you. But to accept, you need to at least pretend you read it and scroll the license to the bottom.

Read the license or not...it's up to you. But to accept, you need to at least pretend you read it and scroll the license to the bottom. The installer will proceed to unpack all the files for GlassFish into ./glassfish.

The installer will proceed to unpack all the files for GlassFish into ./glassfish.3. Run the GlassFish setup script. In the unpacked glassfish directory, there are two .xml files: setup.xml and setup-cluster.xml. Most users will just want to use setup.xml here, but if you're interested in clustering several GlassFish instances across machine, you'll want to look into the clustered setup. I won't go into it here.

The unpacked glassfish dir also contains Apache's Ant build tool, so you don't need to download it. If you already have it available, your copy should work fine, and the chmod command below--which sets the provided Ant's bin scripts executable--would be unnecessary. If you're on Windows, the bin scripts are bat files, so they'll work fine as-is.

Two items to note: you should probably move the glassfish dir where you want it to live in production, and you should run the installer with the version of Java you'd like GlassFish to run under. Both can be changed later, but it's better to just get it right the first time.

~/work ➔ cd glassfish

~/work/glassfish ➔ chmod a+x lib/ant/bin/*

~/work/glassfish ➔ lib/ant/bin/ant -f setup.xml

Buildfile: setup.xml

all:

[mkdir] Created dir: /Users/headius/work/glassfish/bin

get.java.home:

setup.init:

...

jar-unpack:

[unpack200] Unpacking with Unpack200

[unpack200] Source File :/Users/headius/work/glassfish/lib/appserv-cmp.jar.pack.gz

[unpack200] Dest. File :/Users/headius/work/glassfish/lib/appserv-cmp.jar

[delete] Deleting: /Users/headius/work/glassfish/lib/appserv-cmp.jar.pack.gz

...

do.copy.unix:

[copy] Copying 1 file to /Users/headius/work/glassfish/config

[copy] Copying 1 file to /Users/headius/work/glassfish/bin

[copy] Copying 1 file to /Users/headius/work/glassfish/bin

...

create.domain:

[exec] Using port 4848 for Admin.

[exec] Using port 8080 for HTTP Instance.

[exec] Using port 7676 for JMS.

...

BUILD SUCCESSFUL

Total time: 29 seconds

~/work/glassfish ➔

4. Start up your GlassFish server. It's as simple as one command now.

~/work/glassfish ➔ bin/asadmin start-domain

Starting Domain domain1, please wait.

Log redirected to /Users/headius/work/glassfish/domains/domain1/logs/server.log.

Redirecting output to /Users/headius/work/glassfish/domains/domain1/logs/server.log

Domain domain1 is ready to receive client requests. Additional services are being started in background.

Domain [domain1] is running [Sun Java System Application Server 9.1_02 (build b04-fcs)] with its configuration and logs at: [/Users/headius/work/glassfish/domains].

Admin Console is available at [http://localhost:4848].

Use the same port [4848] for "asadmin" commands.

User web applications are available at these URLs:

[http://localhost:8080 https://localhost:8181 ].

Following web-contexts are available:

[/web1 /__wstx-services ].

Standard JMX Clients (like JConsole) can connect to JMXServiceURL:

[service:jmx:rmi:///jndi/rmi://charles-nutters-computer.local:8686/jmxrmi] for domain management purposes.

Domain listens on at least following ports for connections:

[8080 8181 4848 3700 3820 3920 8686 ].

Domain does not support application server clusters and other standalone instances.

~/work/glassfish ➔

Congratulations! You have installed GlassFish. Simple, eh?

A few tips for using your new server:

- There's a web-based admin page at http://localhost:4848 where the admin login is admin/adminadmin by default. You'll want to change that password. Select "Application Server" on the left and then "Administrator Password" along the top.

- Poke around the admin console to get a feel for the services provided. You won't need any of them for the rest of this walkthrough, but you might want to dabble some day. And if you want to set up a connection pool later on (which ActiveRecord-JDBC supports) this is where you'll do it.

- Most folks will probably want to set up init scripts to ensure GlassFish is launched at server startup. That's outside the scope of this walkthrough, but it's pretty simple. I'll update this page (and its equivalent on the JRuby Wiki) once I know more.

- GlassFish works just fine as a standalone server, but many users will want to proxy it through Apache or another web server. Again, this is outside the scope of this walkthrough, but it should be as simple as configuring a virtual host or a set of matching URLs to hit the GlassFish server at port 8080 (which is the default port for web applications). For apps I'm running, however, I just use GlassFish.

Step 2: Package your Application

This step is made super-trivial by Nick Sieger's Warbler. It includes JRuby itself and provides a simple set of commands to package up your app, add a packaging config file, and more. In this case, I'll just be packaging up a simple Rails app.

Note that Warbler works just fine under non-JRuby Ruby implementations, since it's all Ruby code. But again, if you're deploying with JRuby, it's probably a good idea to test and build with JRuby as well.

~/work ➔ jruby -S gem install warbler

Successfully installed warbler-0.9.10

1 gem installed

Installing ri documentation for warbler-0.9.10...

Installing RDoc documentation for warbler-0.9.10...

~/work ➔ cd testapp

~/work/testapp ➔ ls .

README Rakefile app config db doc lib log public script test tmp vendor

~/work/testapp ➔ warble

jar cf testapp.war -C tmp/war .

~/work/testapp ➔

And that's essentially all there is to it. You will get a .war file containing your app, JRuby, Rails, and the Ruby standard library. This one file is now a deployable Rails application, suitable for any app server, any OS, and any platform without a recompile. The target server doesn't even have to have JRuby or Rails installed.

Step 3: Deploy your Application

There are two ways to deploy. You can either go to the Admin Console web page, select "Web Applications" from the "Applications" category on the left, and deploy the file there, or you can just use GlassFish's command-line interface. I will demonstrate the latter, and I'm providing the optional contextroot flag to deploy my app at the root context.

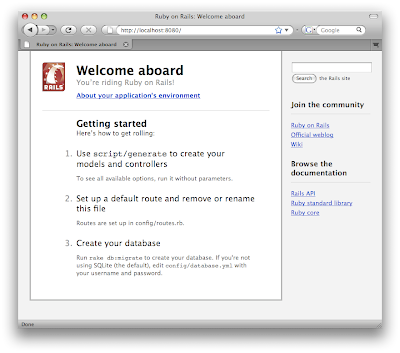

~/work/testapp ➔ ../glassfish/bin/asadmin deploy --contextroot / testapp.war

Command deploy executed successfully.

~/work/testapp ➔

That's it? Yep, that's it! If we now hit the server at port 8080, we can see the app is deployed.

Step 4: Tweaking

There are a few things you can do to tweak your deployment a bit. The first would be to generate a warble.rb config file and adjust settings to suit your application.

~/work/testapp ➔ warble config

~/work/testapp ➔ head config/warble.rb

# Warbler web application assembly configuration file

Warbler::Config.new do |config|

  # Temporary directory where the application is staged

  # config.staging_dir = "tmp/war"

  # Application directories to be included in the webapp.

  config.dirs = %w(app config lib log vendor tmp)

...

In this file you can set the min/max number of Rails instances you need, additional files and directories to include, additional gems and libraries to include, and so on. The file is heavily commented, so you should have no trouble figuring it out, but otherwise the Warbler page on the JRuby Wiki is probably your best source of information. And since a lot of people ask how many instances they should use, I'll provide a definitive answer: it depends. Try the defaults, and scale up or down as appropriate. Hopefully with Rails 2.2 multiple instances will no longer be needed, as is already the case for Merb and friends.

The other tweak you'll probably want to look into is using the JDBC-based ActiveRecord adapters instead of the pure Ruby versions (or the C-based versions, if you're migrating from the C-based Ruby impls). This is generally pretty simple too. Install the JDBC adapter for your database, and tweak your database.yml. Here are the commands on my system:

~/work/testapp ➔ jruby -S gem install activerecord-jdbcmysql-adapter

Successfully installed jdbc-mysql-5.0.4

Successfully installed activerecord-jdbcmysql-adapter-0.8.2

2 gems installed

Installing ri documentation for jdbc-mysql-5.0.4...

Installing ri documentation for activerecord-jdbcmysql-adapter-0.8.2...

Installing RDoc documentation for jdbc-mysql-5.0.4...

Installing RDoc documentation for activerecord-jdbcmysql-adapter-0.8.2...

~/work/testapp ➔ vi config/database.yml

~/work/testapp ➔

And the modified database.yml file:

production:

  # This was changed from "adapter: mysql"

  adapter: jdbcmysql

  encoding: utf8

  ...

Now repackage ("warble" command again), redeploy, and you're done!

Conclusion

Hopefully this walkthrough clears up some confusion around JRuby on Rails deployment to an app server. It's really a simple process, despite the not-so-simple history surrounding Enterprise Application Servers, and GlassFish almost makes it fun :)

Sunday, August 17, 2008

Q/A: What Thread-safe Rails Means

There's been a little bit of buzz about David Heinemeier Hansson's announcement that Josh Peek has joined Rails core and is about to wrap up his GSoC project making Rails finally be thread-safe. To be honest, there probably hasn't been enough buzz, and there have been several misunderstandings about what it means for Rails users in general.

So I figured I'd do a really short Q/A about what effect Rails thread-safety would have on the Rails world, and especially the JRuby world. Naturally there's some of my opinions reflected here, but most of this should be factually correct. I trust you will offer corrections in the comments.

Q: What does it mean to make Rails thread-safe?

A: I'm sure Josh or Michael Koziarski, his GSoC mentor, can explain in more detail what the work involved, but basically it means removing the single coarse-grained lock around every incoming request and replacing it with finer-grained locks around only those resources that need to be shared across threads. So for example, data structures within the logging subsystem have either been modified so they are not shared across threads, or locked appropriately to make sure two threads don't interfere with each other or render those data structures invalid or corrupt. Instead of a single database connection for a given Rails instance, there will be a pool of connections, allowing N database connections to be used by the M requests executing concurrently. It also means allowing requests to potentially execute without consuming a connection, so the number of live, active connections usually will be lower than the number of requests you can handle concurrently.
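To make "N database connections shared by M concurrent requests" concrete, here is a minimal Ruby sketch of a fixed-size pool built on Ruby's thread-safe Queue. It is not the Rails connection pool. `SimplePool` and `with_connection` are hypothetical names, and plain Objects stand in for database connections. The small Mutex around the counters is the "fine-grained lock around only the shared resource" idea in miniature.

```ruby
# Hypothetical fixed-size pool: N "connections" shared by any number of threads.
# Queue#pop blocks while the queue is empty, so at most N are checked out at once.
class SimplePool
  def initialize(size, &factory)
    @available = Queue.new
    size.times { @available << factory.call }
  end

  # Check a connection out, yield it, and always put it back afterwards.
  def with_connection
    conn = @available.pop
    begin
      yield conn
    ensure
      @available << conn
    end
  end
end

# Simulate 10 concurrent "requests" sharing 3 "connections".
pool = SimplePool.new(3) { Object.new }
in_use = 0
max_in_use = 0
lock = Mutex.new # guards only the shared counters, not the whole request

threads = 10.times.map do
  Thread.new do
    pool.with_connection do |_conn|
      lock.synchronize do
        in_use += 1
        max_in_use = [max_in_use, in_use].max
      end
      sleep 0.01 # pretend to wait on the database
      lock.synchronize { in_use -= 1 }
    end
  end
end
threads.each(&:join)

puts "peak connections in use: #{max_in_use}" # never more than 3
```

Ten requests are served while no more than three connections are ever live. That is the same shape as the pooled, thread-safe Rails described above.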

Q: Why is this important? Don't we have true concurrency already with Rails' shared-nothing architecture and multiple processes?

A: Yes, processes and shared-nothing do give us full concurrency, at the cost of having multiple processes to manage. For many applications, this is "good enough" concurrency. But there's a downside to requiring as many processes as concurrent requests: inefficient use of shared resources. In a typical Mongrel setup, handling 10 concurrent requests means you have to have 10 copies of Rails loaded, 10 copies of your application loaded, 10 in-memory data caches, 10 database connections...everything has to be scaled in lock step for every additional request you want to handle concurrently. Multiply the N copies of everything by M different applications, and you're eating many, many times more memory than you should.

Of course there are partial solutions to this that don't require thread safety. Since much of the loaded code and some of the data may be the same across all instances, deployment solutions like Passenger from Phusion can use forking and memory-model improvements in Phusion's Ruby Enterprise Edition to allow all instances to share the portion of memory that's the same. So you reduce the memory load by about the amount of code and data in memory that each instance can safely hold in common, which would usually include Rails itself, your static application code, and to some extent the other libraries loaded by Rails and your app. But you still pay the duplication cost for database connections, application code, and in-memory data that are loaded or created after startup. And you still have "no better" concurrency than the coarse-grained locking, since Ruby Enterprise Edition is just as green-threaded as normal Ruby.

Q: So for green-threaded implementations like Ruby, Ruby EE, and Rubinius, native threading offers no benefit?

A: That's not quite true. Thread-safe Rails will mean that an individual instance, even with green threads, can handle multiple requests at the same time. By "at the same time" I don't mean concurrently...green threads will never allow two requests to actually run concurrently or to utilize multiple cores. What I mean is that if a given request ends up blocking on IO, which happens in almost all requests (due to REST hits, DB hits, filesystem hits and so on), Ruby will now have the option of scheduling another request to execute. Put another way, removing the coarse-grained lock will at least improve concurrency up to the "best" that green-threaded implementations can do, which isn't too bad.
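You can see this effect with plain Ruby threads. In the sketch below, `sleep` stands in for a blocking IO call such as a DB or REST hit, and `handle_request` is a hypothetical name. Only one thread runs Ruby code at a time, but the waits still overlap. Five 0.2-second "requests" should therefore finish in roughly 0.2 seconds instead of about 1 second. Exact timings will vary by machine and implementation.

```ruby
# A simulated request that spends 0.2s "blocked on IO".
def handle_request(id)
  sleep 0.2 # stand-in for a database, REST, or filesystem wait
  "response #{id}"
end

# Serial: one request at a time, like one request per instance.
start = Process.clock_gettime(Process::CLOCK_MONOTONIC)
serial = (1..5).map { |i| handle_request(i) }
serial_time = Process.clock_gettime(Process::CLOCK_MONOTONIC) - start

# Threaded: while one thread waits, the scheduler can run another.
start = Process.clock_gettime(Process::CLOCK_MONOTONIC)
threaded = (1..5).map { |i| Thread.new { handle_request(i) } }.map(&:value)
threaded_time = Process.clock_gettime(Process::CLOCK_MONOTONIC) - start

printf("serial: %.2fs, threaded: %.2fs\n", serial_time, threaded_time)
```

Thread#value joins each thread and returns its result, so both runs produce the same responses in the same order.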

The practical implication of this is that rather than having to run a Rails instance for every request you want to handle at the same time, you will only have to run a certain constant number of instances for each core in your system. Some people use N + 1 or 2N + 1 as their metric to map from cores (N) to the number of instances you would need to effectively utilize those cores. And this means that you'd probably never need more than a couple of Rails instances on a one-core system. Of course you'll need to try it yourself and see what metric works best for your app, but ultimately even on green-threaded implementations you should be able to reduce the number of instances you need.
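The two rules of thumb are simple enough to tabulate. This Ruby sketch applies them for a few core counts. `instances_for` is a hypothetical helper, and the numbers are starting points for your own measurements, not limits.

```ruby
# Rules of thumb mapping core count N to Rails instances (starting points only).
RULES = {
  n_plus_one: ->(cores) { cores + 1 },
  two_n_plus_one: ->(cores) { 2 * cores + 1 }
}.freeze

def instances_for(cores, rule = :n_plus_one)
  RULES.fetch(rule).call(cores)
end

[1, 2, 4, 8].each do |cores|
  puts "#{cores} core(s): N+1 => #{instances_for(cores)}, " \
       "2N+1 => #{instances_for(cores, :two_n_plus_one)}"
end
```

On a one-core box, the two rules give 2 and 3 instances. That matches the "a couple of instances" estimate above.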

Q. Ok, what about native-threaded implementations like JRuby?

A. On JRuby, the situation improves much more than on the green-threaded implementations. Because JRuby implements Ruby threads as native kernel-level threads, a Rails application would only need one instance to handle all concurrent requests across all cores. And by one instance, I mean "nearly one instance" since there might be specific cases where a given application bottlenecks on some shared resource, and you might want to have two or three to reduce that bottleneck. In general, though, I expect those cases will be extremely rare, and most would be JRuby or Rails bugs we should fix.

This means what it sounds like: Rails deployments on JRuby will use 1/Nth the amount of memory they use now, where N is the number of thread-unsafe Rails instances currently required to handle concurrent requests. Even compared to green-threaded implementations running thread-safe Rails, it will likely use 1/Mth the memory where M is the number of cores, since it can parallelize happily across cores with only "one" instance.

Q: Isn't that a huge deal?

A: Yes, that's a huge deal. I know existing JRuby on Rails users are going to be absolutely thrilled about it. And hopefully more folks will consider using JRuby on Rails in production as a result.

And it doesn't end at resource utilization in JRuby's case. With a single Rails instance, JRuby will be able to "warm up" much more quickly, since code we compile and optimize at runtime will immediately be applicable to all incoming requests. The "throttling" we've had to do for some optimizations (to reduce overall memory consumption) may no longer even be needed. Existing JDBC connection pooling support will be more reliable and more efficient, even allowing connection sharing from application to application as well as across instances. And it will put Rails on JRuby on par with other frameworks that have always been (probably) thread-safe like Merb, Groovy on Grails, and all the Java-based frameworks.

Naturally, I'm thrilled. :)

Thursday, August 07, 2008

'Twas Brillig

It's been a long time since I posted last. I figured it was time to get at least a basic update out to folks.

JRuby's been clicking along really well. Perhaps a little too well. Our bug tracker counts 476 open bugs and climbing, even while we're trying to periodically sweep through them. We're slipping behind, but there's a silver lining: every other bug is filed by someone new. So there's happiness in slavery.

Since my last post, a lot has happened. We pushed out JRuby 1.1.3 around the middle of July, which boasted a whole bunch of new awesome. Vladimir Sizikov posted a really nice rundown of the changes.

My favorite item has to be the improved interpreter performance, which now is clearly faster than MRI's interpreter. So whether you're running interpreted or compiled in JRuby, you should be getting pretty solid straight-line performance.

There were also dozens upon dozens of compatibility fixes, an upgrade to RubyGems 1.2, and lots of other miscellaneous changes to make JRuby a little friendlier and easier to use. It's nice to be able to focus on that kind of stuff now that compatibility is mostly a done deal (ok, high 90th percentile...but pretty darn good).

So then...what's on the slate for the next JRuby release?

Making the Leap?

We had originally been talking about JRuby 1.1.3 being the last planned release in the 1.1 line. It had reached a really solid level of stability and performance, people were putting it in production for all sorts of apps, and we were generally very happy with it. "Last planned release" doesn't mean we wouldn't continue to do maintenance releases as needed...it just means we weren't going to do day-to-day development against the 1.1 line.

The primary reason for this is a number of large-scale projects we'd been putting off. For example, the "Java Integration" (JI) subsystem of JRuby has long been a serious thorn in our sides. Based off probably a dozen different people's contributions over nearly eight years, it had become an impenetrable maze of code, half in Java, half in Ruby, but all clearly in the "suck" column. And I'm not saying anything negative about the contributors who wrote all that suckage (which includes me)...they all made valiant attempts to improve the situation. But a dozen 5% solutions had led us down an ever-more-terrifying rabbit hole. We faced the ultimate decision: fix or rewrite?

We were all set to rewrite. I'd already started an experiment I called "MiniJava", a mostly code-generated replacement for JI that boasted dynamic dispatch speed no slower than Ruby to Ruby and static dispatch speed (from Java into Ruby) only about two times slower than Java calling Java. There were many levels of awesome there, but one serious problem: no tests.

JRuby's JI layer has evolved over a long period of time, and has been worked on by a mix of TDD fans and non-fans. There have also been numerous attempts to rewrite portions of the system, usually ending far short of intentions and with no new tests for functionality added along the way. As a result, any attempt to replace the JI layer would be an exercise in pain: even *we* didn't know how it all works. It's probably not exaggerating to say that existing test cases covered less than half of JI functionality, and that the current JRuby team probably understood less than half of JI's implementation. Not a great place to start from.

Faced with the certainty of pain and knowing, workaholic that I am, that a large portion of the rewrite would probably fall on my shoulders, I decided to give it one more go. I decided to attempt an in-place refactoring of JRuby's Java Integration layer, tens of thousands of lines of spaghetti Java and Ruby code.

Redemption

I've had false starts before. The compiler had at least two partial attempts that failed to go anywhere. I've rewritten the JRuby interpreter several times. Hell, I can't count the number of times I tried to refactor JRuby's IO subsystem before finally succeeding. But JI...man, that is some seriously heinous code. So I had to start small. How about something I was already intimately familiar with: method dispatch.

require 'java'

require 'benchmark'

puts "Measure bytelist appends (via Java integration)"

5.times {

puts Benchmark.measure {

sb = org.jruby.util.ByteList.new

foo = org.jruby.util.ByteList.plain("foo")

1000000.times {

sb.append(foo)

}

}

}

puts "Measure string appends (via normal Ruby)"

5.times {

puts Benchmark.measure {

str = ""

foo = "foo"

1000000.times {

str << foo

}

}

}

Here's one of our JI benchmarks. The idea here is that since JRuby's String type is backed by a ByteList, appending to a ByteList through Java integration should be roughly equivalent in execution cost to appending to a String. Or at least, that would be the ideal situation. It was not, however, the case in JRuby 1.1.3.

JRuby 1.1.3

Measure bytelist appends (via Java integration)

3.580000 0.000000 3.580000 ( 3.579149)

2.551000 0.000000 2.551000 ( 2.551251)

2.615000 0.000000 2.615000 ( 2.614461)

2.505000 0.000000 2.505000 ( 2.505265)

2.715000 0.000000 2.715000 ( 2.715380)

Measure string appends (via normal Ruby)

0.290000 0.000000 0.290000 ( 0.289629)

0.247000 0.000000 0.247000 ( 0.246666)

0.259000 0.000000 0.259000 ( 0.259266)

0.250000 0.000000 0.250000 ( 0.250354)

0.253000 0.000000 0.253000 ( 0.253113)

Ouch. Ten times worse? Sure, I could see it being a couple times worse; after all, going from Ruby code into a Ruby type and back out to Ruby code should be faster than Ruby to Java to Ruby, since we're talking about leaving the controlled world of JRuby core and coming back. But not ten times worse.

Armed with this and a few other benchmarks, I started attacking the dispatch path for Java calls. Largely the optimizations made were the same ones we'd done over the past year for Ruby code: avoid boxing arguments in arrays when possible; clean up and simplify overloaded method selection; as much as possible eliminate constructing any objects not directly used in the eventual reflected call. And early returns started to look really good. Once the basics of the new call logic were in place, performance started to look a lot better.

JRuby trunk

Measure bytelist appends (via Java integration)

1.490000 0.000000 1.490000 ( 1.489711)

0.658000 0.000000 0.658000 ( 0.657639)

0.646000 0.000000 0.646000 ( 0.645717)

0.638000 0.000000 0.638000 ( 0.637849)

0.603000 0.000000 0.603000 ( 0.602439)

Measure string appends (via normal Ruby)

0.312000 0.000000 0.312000 ( 0.312046)

0.232000 0.000000 0.232000 ( 0.231591)

0.243000 0.000000 0.243000 ( 0.242401)

0.235000 0.000000 0.235000 ( 0.235499)

0.232000 0.000000 0.232000 ( 0.231688)

That's more like it...less than three times slower than one of our fastest Ruby-based calls. Other benchmarks showed similar improvement.

JRuby 1.1.3

Measure Integer.valueOf, overloaded call with a primitive

2.384000 0.000000 2.384000 ( 2.383484)

2.167000 0.000000 2.167000 ( 2.167232)

2.191000 0.000000 2.191000 ( 2.191187)

2.212000 0.000000 2.212000 ( 2.211901)

2.202000 0.000000 2.202000 ( 2.202460)

JRuby trunk

Measure Integer.valueOf, overloaded call with a primitive

0.635000 0.000000 0.635000 ( 0.635259)

0.470000 0.000000 0.470000 ( 0.470315)

0.471000 0.000000 0.471000 ( 0.471354)

0.468000 0.000000 0.468000 ( 0.467723)

0.468000 0.000000 0.468000 ( 0.467589)

Here's an example of where improving overload selection made a huge difference. Previously, every time Ruby code called an overloaded Java method, we built up an ArrayList of all argument types, used that to get an aggregate hashcode, used the hashcode to see whether there was a cached previous match, and otherwise went through a brute-force search to match incoming types to outgoing Java signatures.

Wait a second. We constructed an ArrayList just to get a hashcode based on its contents? That dog won't hunt, Monsignor.

So I replaced that logic with a five-line method that aggregates the types' hashcodes into an uber-hashcode, which is then used as the cache key. Combine that with specific-arity searches (avoiding argument boxing in arrays) and search logic that understands Ruby objects (avoiding pre-coercing each argument to potentially the wrong type), and hey, we're starting to see the light.
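JRuby's actual overload selection is written in Java, so the following is only a Ruby illustration of the idea under some assumptions. `Overload`, `OverloadSelector`, and `select2` are hypothetical names, and each "overload" is just a list of parameter classes. The sketch has a two-argument entry point that folds the two argument classes' hash codes into one integer key, with no intermediate list allocated to compute it. The brute-force search runs only on a cache miss. It also checks the cached classes on every hit so that a hash collision can't return the wrong overload; the text doesn't cover that detail.

```ruby
# Hypothetical model of a Java overload: a display name plus parameter classes.
Overload = Struct.new(:name, :param_types)

class OverloadSelector
  attr_reader :searches

  def initialize(overloads)
    @overloads = overloads
    @cache = {}   # combined hash code => [[arg classes], chosen overload]
    @searches = 0 # number of brute-force searches performed
  end

  # Two-argument entry point: the key is built directly from the two classes,
  # with no intermediate list allocated just to compute a hash.
  def select2(a, b)
    ca = a.class
    cb = b.class
    key = ca.hash * 31 + cb.hash
    hit = @cache[key]
    # Confirm the cached classes really match, in case two combinations collide.
    return hit[1] if hit && hit[0][0] == ca && hit[0][1] == cb

    overload = search(ca, cb)
    @cache[key] = [[ca, cb], overload]
    overload
  end

  private

  # Brute-force fallback: first overload whose parameters accept both classes
  # (nil if none do).
  def search(ca, cb)
    @searches += 1
    @overloads.find do |o|
      o.param_types.size == 2 && ca <= o.param_types[0] && cb <= o.param_types[1]
    end
  end
end

selector = OverloadSelector.new([
  Overload.new("add(Integer, Integer)", [Integer, Integer]),
  Overload.new("add(String, String)", [String, String]),
  Overload.new("add(Numeric, Numeric)", [Numeric, Numeric])
])

first = selector.select2(1, 2)   # searched, then cached
mixed = selector.select2(1.5, 2) # new class combination: searched again
again = selector.select2(3, 4)   # same classes as the first call: cache hit
puts first.name, mixed.name, again.name
puts "searches: #{selector.searches}"
```

The third call reuses the first call's result without searching, so only two searches happen across the three calls.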

Keeping it Real

Once it became apparent that a refactoring was most definitely possible, even if it took some hard work and a lot of dedication, we decided to put out a 1.1.4 release, focusing primarily on Java integration, but as always including peripheral bug fixes and performance improvements. Having made that decision, the JI job suddenly became a lot more important. I vowed that 1.1.3 would be the last release to contain a JI layer we were silently afraid of.

Then there was the matter of existing users. Since the beginning of the year, more and more folks have been branching out from the core Rails world that had been JRuby's bread and butter into "Java scripting" sorts of applications. Probably the most prominent ones are the team at Happy Camper Studios, who not only built a real-world-practical Swing framework for JRuby (see MonkeyBars) and a library for packaging up JRuby-based Ruby apps as single-file executables (see Rawr), but who also were pushing the boundaries of what Ruby and JRuby are capable of (see RailGun, and probably see a lot more in the near future). And to top it off, they were releasing MonkeyBars-based apps commercially...making their living depending on JRuby's JI layer.

Would it be a good decision to leave them with the old code for six months while we do a rewrite? I'll let you think about that for a bit.

More Results

Another area that needed serious work was juggling Ruby and Java arrays. The logic for coercing a Ruby array into a Java array was implemented half in Ruby and half in Java, and neither half was very efficient on its own. Add to that the constant back and forth, and you have a recipe for disaster. With another multi-day effort, I managed to wrestle the code to one side of the fence, refactor it, and improve performance by more than twenty times.

Code

require 'java'

require 'benchmark'

TIMES = (ARGV[0] || 5).to_i

TIMES.times do

Benchmark.bm(30) do |bm|

bm.report("control") {a = [1,2,3,4]; 100_000.times {a}}

bm.report("ary.to_java") {a = [1,2,3,4]; 100_000.times {a.to_java}}

bm.report("ary.to_java :object") {a = [1,2,3,4]; 100_000.times {a.to_java :object}}

bm.report("ary.to_java :string") {a = [1,2,3,4]; 100_000.times {a.to_java :string}}

end

end

JRuby 1.1.3

user system total real

control 0.013000 0.000000 0.013000 ( 0.013130)

ary.to_java 7.523000 0.000000 7.523000 ( 7.522787)

ary.to_java :object 7.794000 0.000000 7.794000 ( 7.794777)

ary.to_java :string 9.905000 0.000000 9.905000 ( 9.905805)

JRuby trunk

user system total real

control 0.009000 0.000000 0.009000 ( 0.009548)

ary.to_java 0.240000 0.000000 0.240000 ( 0.239946)

ary.to_java :object 0.248000 0.000000 0.248000 ( 0.247385)

ary.to_java :string 0.418000 0.000000 0.418000 ( 0.418230)

You can find similar improvements on trunk for object construction, interface implementation, and several other areas. Work continues, but performance is starting to look way better.

Don't You Think About Anything But Performance?

Of course a side effect of simplifying the code for performance reasons is that fixing bugs and adding features becomes a lot easier. Check out a tiny bit of new logic that works now on JRuby trunk:

# "closure conversion" was only supported for instance methods before

thread = java.lang.Thread.new { puts 'Wahoo!' }

thread.start

thread.join

# output: 'Wahoo!'

Of course this is a somewhat contrived example, but there are some obviously useful examples too:

javax.swing.SwingUtilities.invoke_later { puts "Yay, Swing!" }

# script terminates, but the Swing event thread keeps running until it's fired our block

The ability to pass a block to "any method" that accepted an interface as its last parameter was only functional for instance methods in all previous releases of JRuby. After the refactoring, expanding it to constructors and static methods took about 5 minutes. With tests. We'll come back to that in a moment.
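In JRuby this conversion happens inside the Java integration layer, in Java. The hypothetical plain-Ruby sketch below only illustrates the idea. When a block is given, it is wrapped in an object that answers to the interface's single method and passed as the final argument. Here that method is `run`, as with `java.lang.Runnable`. `BlockImpl`, `TaskRunner`, and `call_with_block` are made-up names.

```ruby
# Hypothetical adapter: turns a block into an object that responds to a single
# interface method, the way a one-method Java interface (e.g. Runnable#run) would.
class BlockImpl
  def initialize(method_name, &block)
    define_singleton_method(method_name) { |*args| block.call(*args) }
  end
end

# Stand-in for a static Java method whose last parameter is such an interface.
class TaskRunner
  def self.run_now(label, task)
    "#{label}: #{task.run}"
  end
end

# If a block was given, wrap it and pass it as the final argument.
def call_with_block(receiver, meth, iface_method, *args, &block)
  args << BlockImpl.new(iface_method, &block) if block
  receiver.public_send(meth, *args)
end

result = call_with_block(TaskRunner, :run_now, :run, "static call") { "Wahoo!" }
puts result
```

The receiver here is a class, so this is the "static method" case that previously didn't work in JRuby.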

The simplification of the code also means we'll probably be able to fix a bunch of Java Integration bugs we've punted on for months. For example, it's been a long-standing bug that you can't implement a Java interface in Ruby by defining the underscored versions of that interface's method names. But now, with newly rewritten JI interface-implementation code, it should be a snap to add that feature. We're planning to do a JI bug audit for 1.1.4 and knock down as many long-standing issues as we can.
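At its core, the missing feature is a name mapping: when Java calls `actionPerformed`, also look for a Ruby method named `action_performed`. This is a hypothetical plain-Ruby sketch of that lookup. `underscore` and `invoke_interface_method` are illustrative names, not JRuby APIs, and the real fix would live in JRuby's Java-side interface-implementation code.

```ruby
# Convert a Java-style camelCase name to Ruby's snake_case convention.
def underscore(name)
  name.gsub(/([a-z\d])([A-Z])/, '\1_\2').downcase
end

# Dispatch an interface call to whichever spelling the Ruby object defines.
def invoke_interface_method(impl, java_name, *args)
  [java_name, underscore(java_name)].each do |candidate|
    return impl.public_send(candidate, *args) if impl.respond_to?(candidate)
  end
  raise NoMethodError, "#{impl.class} defines neither #{java_name} nor #{underscore(java_name)}"
end

# A Ruby "listener" written with the idiomatic underscored name.
class ClickListener
  def action_performed(event)
    "handled #{event}"
  end
end

result = invoke_interface_method(ClickListener.new, "actionPerformed", "click")
puts result
```

The Java spelling is tried first, so existing code that defines camelCase methods keeps working unchanged.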

Proving It Works

So back to the tests for a moment.

Some months ago we managed to get a baseline suite of RSpec specs into the JRuby development process thanks to Nick Sieger. Initially, the tests were very sparse, only a few specific things Nick had a chance to put together. But as interested community members started sending in patches, we started to grow a nice little suite.

As I've been working on the refactoring, I've been trying to "test along" as I learn how bits of JRuby's JI layer function. And so I've added a number of new specs for type coercion, interface implementation, method dispatch and overload selection, and others. Ola Bini came out of hiding to contribute a pretty comprehensive set of Array specs, which were a great help during the slaying of the Array-coercion dragon. So we're finally getting that suite we'd always needed, and once the refactoring is done we'll be in a far better position to start taking JI to the next level.

What's Next?

At the moment the refactoring is maybe 25% along. I'm the only one working on it, and it's a crapload of code, so it's going to take a little time. But most key performance bottlenecks have been remedied at this point.

There are a few areas that remain to be tackled:

- Refactoring the extensive logic governing how Ruby classes can extend abstract or concrete Java classes. Kresten Krab Thorup contributed this well over a year ago, and it's kinda been an island unto itself. It will take a considerable effort to rework.

- Moving the remaining core JI logic from Ruby into Java. I know, turtles and all that...but I've seen enough projects try to do large-scale refactorings in Ruby to know that the tools and techniques simply aren't there yet. By moving this logic into Java, where it belongs, we'll probably be able to delete 90% of it. That means more room for performance and features, and a much higher likelihood that future enhancements can safely live in Ruby without incurring a severe performance penalty.

- Eliminating the old "lower level" and "higher level" Java integration layers. Originally, JRuby's JI was implemented as a set of reflection-like Ruby classes wrapping Java's reflection classes (which comprised the "lower level") and a substantial amount of Ruby code that juggled these reflected bits to represent Java types and make Java calls (the so-called "higher level"). While conceptually this makes some sense, in practice it meant that any call from Ruby to Java actually ended up as dozens, maybe hundreds of Ruby invocations before the target Java method could be invoked. Performance work over the past four years has improved matters somewhat, but the only substantial gains have come, and will continue to come, from eliminating the two separate levels entirely.

I intend for all of this to be in place for JRuby 1.1.4, which we want to release this month.

Next Time on Headius: Tune in for my next post, hopefully soon, about two Rubinius APIs we've added for 1.1.4: Multiple VMs (MVM) and Foreign Function Interface (FFI).

Monday, August 04, 2008

libdl _dl_debug_initialize problem solved

I'm posting this to make sure it gets out there, so nobody else spends a couple days trying to fix it.

Recently, after some upgrade, I started getting the following message during JRuby's run of RubySpecs:

Inconsistency detected by ld.so: dl-open.c: 623: _dl_open: Assertion `_dl_debug_initialize (0, args.nsid)->r_state == RT_CONSISTENT' failed!

I narrowed it down to a call to getgrnam in the 'etc' library, which we provide using JNA. Calling that function through any means caused this error.
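If you suspect you're hitting the same thing, a tiny script that calls getgrnam through Ruby's etc library is a quick way to check. This is a minimal sketch: lookup_group is a hypothetical helper, and the group name "root" is just an assumption, so substitute any group that exists on your machine. On the broken setup described here, the call died with the ld.so assertion instead of returning.

```ruby
require 'etc'

# Hypothetical helper: look up a group by name via getgrnam.
# Etc.getgrnam raises ArgumentError when the group doesn't exist,
# so that case is turned into nil.
def lookup_group(name)
  Etc.getgrnam(name)
rescue ArgumentError
  nil
end

# "root" is an assumed group name; change it for your system.
group = lookup_group("root")
puts(group ? "#{group.name} has gid #{group.gid}" : "no such group")
```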

My debugging skills are pretty poor when it comes to C libraries, so I mostly started poking around trying to upgrade or downgrade stuff. Most other posts about this error seemed to do the same thing, but weren't very helpful about which libraries or applications were changed. Eventually I got back to looking at libc6 versions, to see if I could downgrade and hopefully eliminate the problem, when I saw this text in the libc6-i686 package:

WARNING: Some third-party binaries may not work well with these
libraries. Most notably, IBM's JDK. If you experience problems with
such applications, you will need to remove this package.

Nothing else seemed to depend on libc6-i686, so I removed it. And voila, the problem went away.

This was on a pretty vanilla install of Ubuntu 7.10 desktop with nothing special done to it. I'm not sure where libc6-i686 came from.

On a side note, sorry to my regular readers for not blogging this past month. I've been hard at work on a bunch of new JRuby stuff for an upcoming 1.1.4 release. I'll tell you all about it soon.

Friday, June 27, 2008

JRuby Japanese Tour 2008 Wrap-Up!

Whew! I survived the insanity of the JRuby Japanese Tour 2008, and now it's time to report on it. This post will mostly be a blow-by-blow account of the trip, and I'll try to post more in-depth thoughts later. I am still in Tokyo and need to repack my luggage, so this will be brief.

Day 1

- Left my warm, sunny vacation in Michigan to board flight #1 from Chicago to Minneapolis

- First-class upgrade for Chicago-Minneapolis flight. Whoopie...it's like an hour flight.

- Just enough time in Minneapolis airport to change some money. Hopefully 20k ¥ will be enough. Compatible cash machines are hard to come by in Japan.

- Twelve-hour flight #2 from Minneapolis to Narita. Glad I moved to a window seat facing a bulkhead, since I was able to stretch out and sleep most of the flight.

- Arrived in Narita without event; purchased bus ticket to Tsukuba and rented a cell phone.

- Bus ride to Tsukuba went through some very nice countryside.

- Arrived in Tsukuba, walked about five minutes to the hotel and ran into Chad Fowler, Evan Phoenix, Rich Kilmer and others gathering in the lobby.

- Checked into my room, dropped off my stuff, went back down to lobby to find other American rubyists had taken off for dinner. Teh suck. Ate unimpressive dinner alone in hotel restaurant.

- Ruby Kaigi day one.

- Sun folks provided a JRuby t-shirt, which was great because I forgot to pack one of mine!

- Delivered JRuby presentation, and it went very well. Demos almost all worked perfectly, lots of questions showed people were impressed.

- Met up with Sun guys at Kaigi booth for a bit. They were giving away little Duke+Ruby candies! Awesome!

- At evening event, lots of discussion about JRuby, and I got to show off Duby a little bit too.

- Ruby Kaigi day two.

- Some good talks, but definitely a "day two" slate.

- Especially liked Naoto Takai and Koichiro Ohba's enterprise Ruby talk. Very pragmatic, hopefully very helpful for .jp Rubyists.

- Met up with ko1 and Prof Kakehi from Tokyo University to discuss progress of MVM collaboration.

- Had to leave before Reject Kaigi to transfer to a hotel near Haneda Airport.

- Dinner at Haneda Airport with Takashi Shitamichi of Sun KK.

- Stayed at nearby comfortable JAL Hotel, basically JAL's airport hotel.

- Quick breakfast at Haneda Airport; "morning set" included a half-boiled egg, toast, salad, coffee.

- Flight #3 from Haneda to Izumo. Upgraded for 1000¥ to first class.

- Takashi and I met Matsue folks at Izumo airport for a short ride to Matsue.

- Met up with NaCl folks including Matz, Shugo Maeda, and others.

- Listened to a presentation in English about a large Ruby app migrated from an old COBOL mainframe app. JRuby used to interface Ruby with reporting solutions.

- Lunch box at NaCl after presentation.

- Played Shogi with Shugo after lunch. Shugo beat me pretty handily. He said I was strong (but I think he was just being polite).

- Played Igo (Go) with Hideyuki Yasuda. He played with a 9-stone handicap and was winning when I had to leave. He invited me to join NaCl Igo club on KGS and said he'd like to continue the game online.

- Delivered a lecture on JRuby with Takashi at Shimane University. Received some good questions, but it was a tough crowd (kids just starting out in CS).

- Took a quick tour of Matsue Castle with Takashi and a personal guide. Basically ran to the top, looked around, and ran back down. Back to work!

- Evening event at office of the "Ruby City Matsue" project. While everything was being set up, demonstrated JRuby/JVM/HotSpot optimizations for NaCl folks.

- Delivered the opening toast for the evening event. Barely had time to eat between questions from folks. Showed off Duby and more HotSpot JIT-logging coolness.

- Post-event trip to local Irish pub. Many Guinness were drunk. Many Rubyists were drunk.

- Walked back to hotel earlier than others to get some sleep.

- Took a bus from Matsue back to Izumo Airport.

- Flights #4 and #5 took me from Izumo to Haneda and Haneda to Fukuoka. Saw Mount Fuji from the air, poking just above the clouds.

- Takashi and I missed flight #5, so we were delayed about an hour.

- Arrived in Fukuoka, immediately raced over to Ruby Business Commons event. Delivered presentation to an extremely receptive crowd.

- Post RBC event included beer, various dried fishy things, and lots of photo-taking and card-exchanging. Showed off Duby again, and met authors of an upcoming Japanese book on JRuby!

- Invited out for more drinking (famous Fukuoka Shouchu), but Takashi wisely told them we were very tired.

- Breakfast at Fukuoka airport. "Honey toast" and coffee. Basically a thick slice of toast with butter and honey.

- Flight back to Tokyo (Haneda) from Fukuoka.

- Checked into Cerulean Tower Hotel in Shibuya, my final home for the trip.

- Off to Shinagawa to present JRuby at Rakuten's offices. Across the street from Namco/Bandai! Lots of great questions...I think they were impressed.

- Back to Shibuya with Takashi for Shabu-Shabu dinner. Mid-range Japanese beef...truly excellent. Ate way too much.

- Woke up a couple times in the night with indigestion. Why oh why did I eat so much beef?

- Off to Sun KK offices in Yoga for a public techtalk event.

- JRuby presentation wowed attendees...lots of questions after and great discussions.

- Traditional Japanese-style dinner with Takai-san and Ohba-san, plus the excellent Sun KK JRuby enthusiasts.

- Witnessed registration of new jruby-users.jp site and discussed new mascot ideas for JRuby. NekoRuby, perhaps?

- Many new consumption firsts: Hoppy (cheap beer plus shouchu on ice), raw beef, and raw horse. I can check horse off my list.

- Ramen in Shibuya to close out the night.

- Internal presentation on JRuby at Sun KK offices. Slim attendance...there were apparently HR training sessions at the same time. But still fun.

- Big sigh of relief at being done presenting. Lunch at a Chinese restaurant with Takashi, then said goodbye. どうもありがとうございます (thank you very much), Shitamichi-san!

- Stopped into hotel to arrange remaining plans for the day.

- Visited the Edo-Tokyo Museum in Ryogoku to see images and artifacts of the old capital. Choked up a bit watching videos of the US incendiary bombing raids on Tokyo.

- Headed to Akihabara to meet up with ko1. Wandered around for about an hour before heading up to his "Sasada-lab".

- Mini "JRuby Kaigi" at Sasada-lab. Showed off Duby, JRuby optimizations, and Ruby-Processing demos (plus applets!).

- Off to "Meido Kissa" ("Maid Cafe") with ko1 and other members of ruby-core. Very unusual experience, but entertaining.

- Back to hotel. Considered a trip to my favorite Belgian beer bar in Shibuya ("Belgo"), but I'm plumb tuckered out. Catching up on email, IRC, and writing this post.

- All that remains is getting to Narita and flying home. Hopefully all will go smoothly.

Monday, June 02, 2008

Inspiration from RailsConf

RailsConf 2008 is over, and it was far better than last year's. I'm not one for drawn-out conference wrap-up posts, so here's a summary of my most inspiring moments and, where applicable, how they're going to affect JRuby going forward.

- IronRuby and Rubinius both running Rails has inspired me to finally knock out the last Rails bottlenecks in JRuby. Look for a release sometime this summer or fall, accompanied by a whole raft of numbers proving better performance under JRuby than under any other option. Oh, and huge congratulations to both teams; I wish you the best of luck on the road to running larger apps.

- Phusion's Passenger (formerly mod_rails) has made some excellent incremental improvements to MRI for running Rails. It's nothing revolutionary, but judging by the graphs they've managed 10-20% memory and perf improvements over the next best MRI-based option. We're going to try to match them by more aggressively sharing immutable runtime data across JRuby instances such as parsed Ruby code (which on some measurements accounts for almost 40% of a freshly-started app's memory use). We'd like to be able to say that JRuby is also the most memory-efficient way to run Rails in the near future.

- The Maglev presentation inspired me to dive back into performance. For the most part, we stopped really working hard on performance once we started to be generally as fast as Ruby 1.9. Now we'll start pulling out all the stops and really kick JRuby into high gear.

- Wilson Bilkovich impressed me most when he used the historically correct "drinking the Flavor-Ade" instead of the incorrect but more popular "drinking the Kool-Aid".

- Ezra's talk on Vertebra, Engine Yard's upcoming Erlang-based XMPP routing engine, almost inspired me to try out Erlang a bit. Almost. At any rate it sounds awesome...I am all set to write an agent plugin for JRuby when it's released and the protocol is published
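The parsed-code sharing mentioned in the Passenger item is simple to state: parse each unique piece of source once, freeze the result, and hand the same tree to every runtime that loads it. Here's a rough sketch of that idea in plain Ruby, with MRI's Ripper standing in for JRuby's parser. ParseCache is a hypothetical class, not part of JRuby, and real sharing across JRuby instances would happen at the Java level inside the JVM.

```ruby
require 'ripper'

# Hypothetical cache that lets several "runtimes" in one process share
# immutable parse trees. Ripper stands in for JRuby's own parser here.
class ParseCache
  def initialize
    @trees = {}
    @lock = Mutex.new
  end

  # Returns a deep-frozen parse tree; identical source text is parsed only once.
  # (String hash keys are copied and frozen by Hash#[]=.)
  def fetch(source)
    @lock.synchronize do
      @trees[source] ||= deep_freeze(Ripper.sexp(source))
    end
  end

  private

  # Freeze nested arrays and their contents so no runtime can mutate shared data.
  def deep_freeze(node)
    node.each { |child| deep_freeze(child) } if node.is_a?(Array)
    node.freeze
  end
end

cache = ParseCache.new
# Two runtimes loading the same code get the very same frozen tree.
runtime_a_tree = cache.fetch("def hello; 42; end")
runtime_b_tree = cache.fetch("def hello; 42; end")
puts runtime_a_tree.equal?(runtime_b_tree) # prints "true"
```

Freezing is what makes the sharing safe: since no runtime can mutate the tree, one copy can serve them all.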